NLiPsCalib: An Efficient Calibration Framework for High-Fidelity 3D Reconstruction of Curved Visuotactile Sensors

Abstract

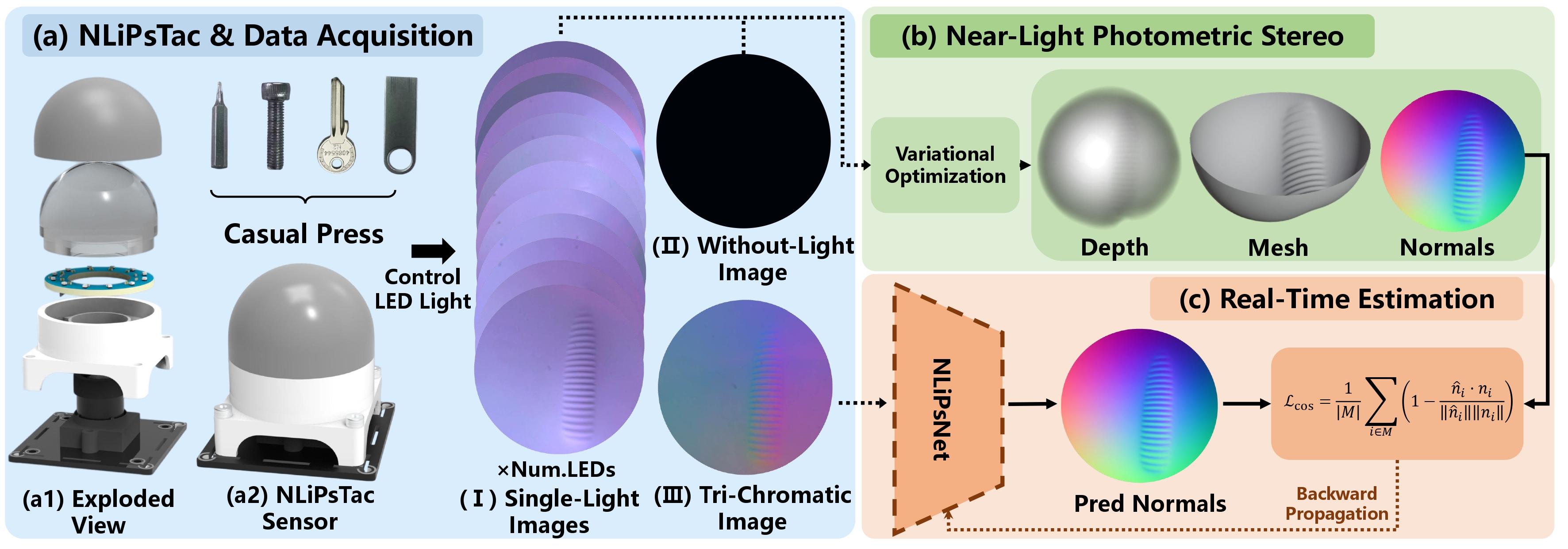

Recent advances in tactile sensing increasingly employ biomimetic, fingertip-like curved surfaces to enhance sensorimotor capabilities. Although such curved visuotactile sensors enable more conformal object contact, their perceptual quality is often degraded by non-uniform illumination caused by near-field optical effects, which reduces reconstruction accuracy and typically necessitates calibration. Existing calibration methods commonly rely on customized indenters and specialized devices to collect large-scale photometric data, but these processes are expensive and labor-intensive. To address these limitations, we present NLiPsCalib, a physics-consistent and efficient calibration framework for curved visuotactile sensors. NLiPsCalib integrates controllable near-field light sources and leverages Near-Light Photometric Stereo (NLiPs) to estimate contact geometry, simplifying calibration to just a few simple contacts with everyday objects. We further introduce NLiPsTac, a controllable-light-source tactile sensor developed to validate our framework. Experimental results demonstrate that our approach enables high-fidelity 3D reconstruction across diverse curved form factors with a simple calibration procedure. We emphasize that our approach lowers the barrier to developing customized visuotactile sensors of diverse geometries, thereby making visuotactile sensing more accessible to the broader community.

Pipeline

We introduce NLiPsCalib, a unified sensor calibration framework that derives ground-truth geometries directly from multi-source photometric cues. The goal of NLiPsCalib is to infer surface normals n using near-light photometric stereo. This approach offers two key advantages: it estimates normals directly, without costly equipment such as CNC-machined probes or robotic arms, and it avoids spatial misalignment between the ground-truth normals n and the intensity measurements (x, y, r, g, b). Together, these advantages allow the creation of high-fidelity paired calibration datasets for learning the mapping f from intensity measurements (x, y, r, g, b) to normals n. Building on this dataset, we further present NLiPsNet, a neural network for real-time normal prediction during deployment.
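To make the near-light photometric stereo step concrete, the sketch below recovers a single surface normal from intensities observed under several point light sources. It is an illustration under stated assumptions, not the NLiPsCalib implementation: it assumes a Lambertian surface, isotropic point lights with inverse-square falloff, and a known 3D position for the surface point (in practice the position is generally unknown and is estimated jointly with the normals). The function name `near_light_normal`, the light positions, and all numeric values are hypothetical.

```python
import numpy as np

def near_light_normal(point, lights, intensities):
    """Estimate the unit normal and albedo at a known 3D surface point.

    Assumed model (not taken from the source): for light j at position s_j,
        I_j = albedo * dot(n, s_j - p) / ||s_j - p||**3
    which combines the unit light direction with inverse-square falloff.
    """
    point = np.asarray(point, dtype=float)
    lights = np.asarray(lights, dtype=float)
    intensities = np.asarray(intensities, dtype=float)

    to_light = lights - point                 # (k, 3) vectors from point to each light
    dist = np.linalg.norm(to_light, axis=1)   # (k,) distances to each light
    A = to_light / dist[:, None] ** 3         # per-light direction with falloff

    # Solve A @ b = I in the least-squares sense, where b = albedo * n.
    b, *_ = np.linalg.lstsq(A, intensities, rcond=None)
    albedo = np.linalg.norm(b)
    return b / albedo, albedo

# Synthetic check with hypothetical values: render intensities from a known
# normal, then recover it.
true_normal = np.array([0.2, -0.1, 0.97])
true_normal /= np.linalg.norm(true_normal)
true_albedo = 0.8
p = np.zeros(3)
light_positions = np.array([[10.0, 0.0, 15.0],
                            [-10.0, 0.0, 15.0],
                            [0.0, 10.0, 15.0],
                            [0.0, -10.0, 15.0]])
d = light_positions - p
r = np.linalg.norm(d, axis=1)
observed = true_albedo * (d @ true_normal) / r ** 3

est_normal, est_albedo = near_light_normal(p, light_positions, observed)
print(est_normal, est_albedo)
```

Unlike distant-light photometric stereo, the light direction and falloff here vary with the position of each surface point, which is why near-field illumination needs to be modeled explicitly for a curved sensor. At least three lights in non-degenerate positions are needed for the linear system to determine the normal.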

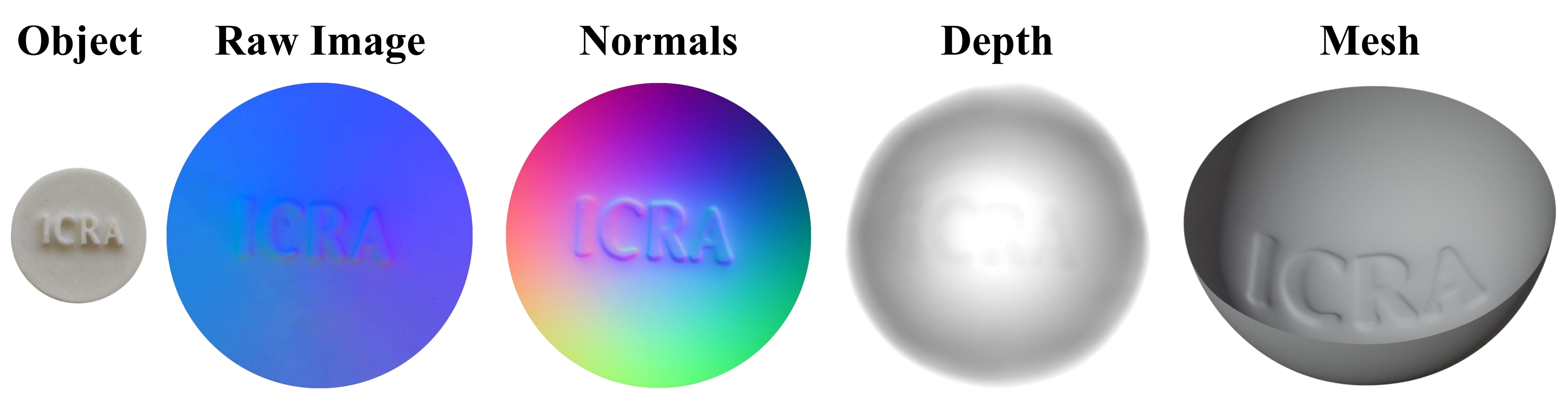

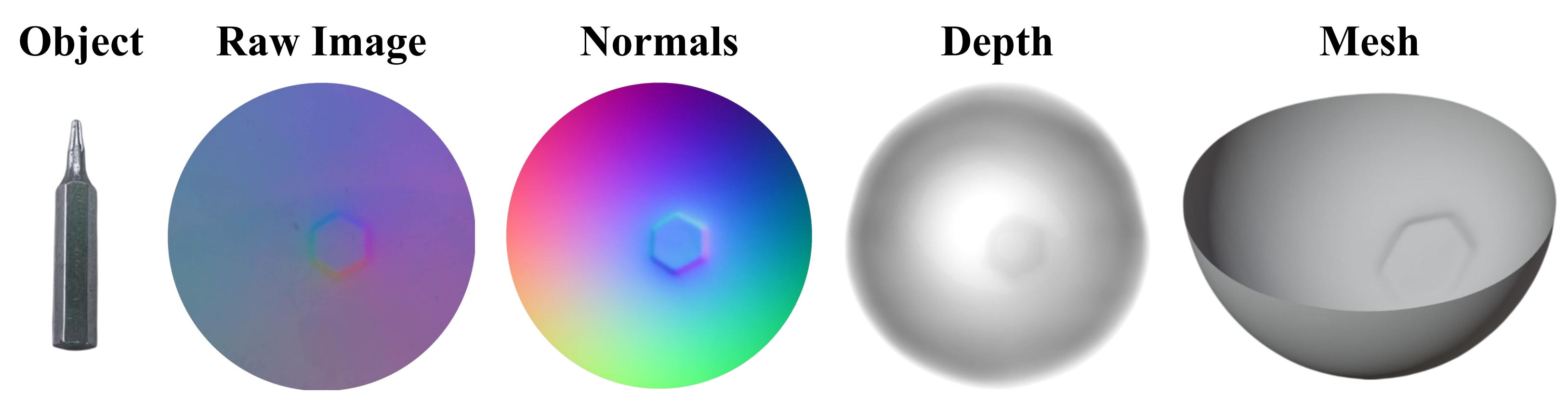

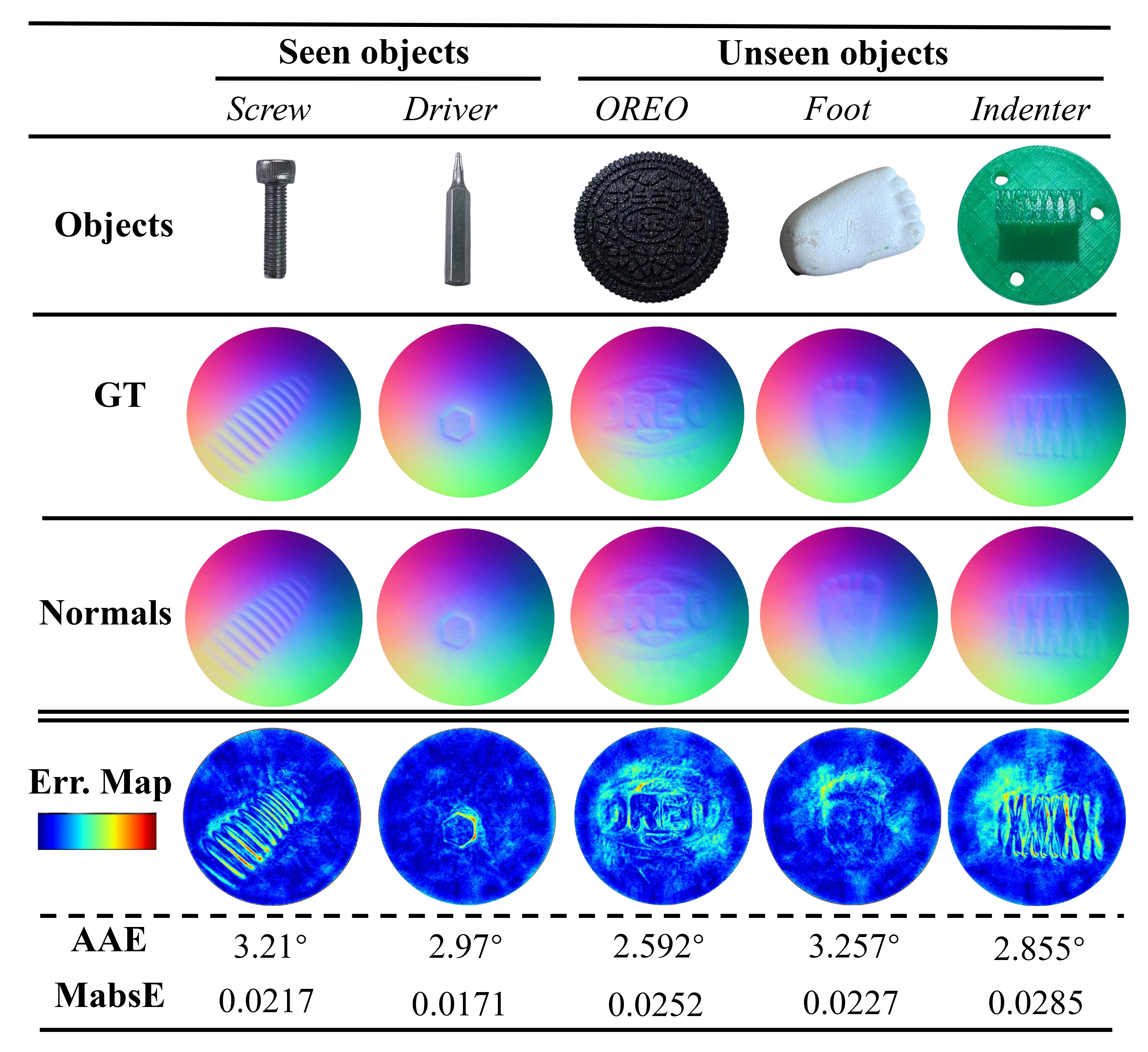

Calibration Results

We press different objects into NLiPsTac and calibrate the sensor separately with each one.

Calibration objects: ICRA ICON, Driver, Screw, Circuit Board, Sphere Probe

Calibration Accuracy

Real-Time Reconstruction Results

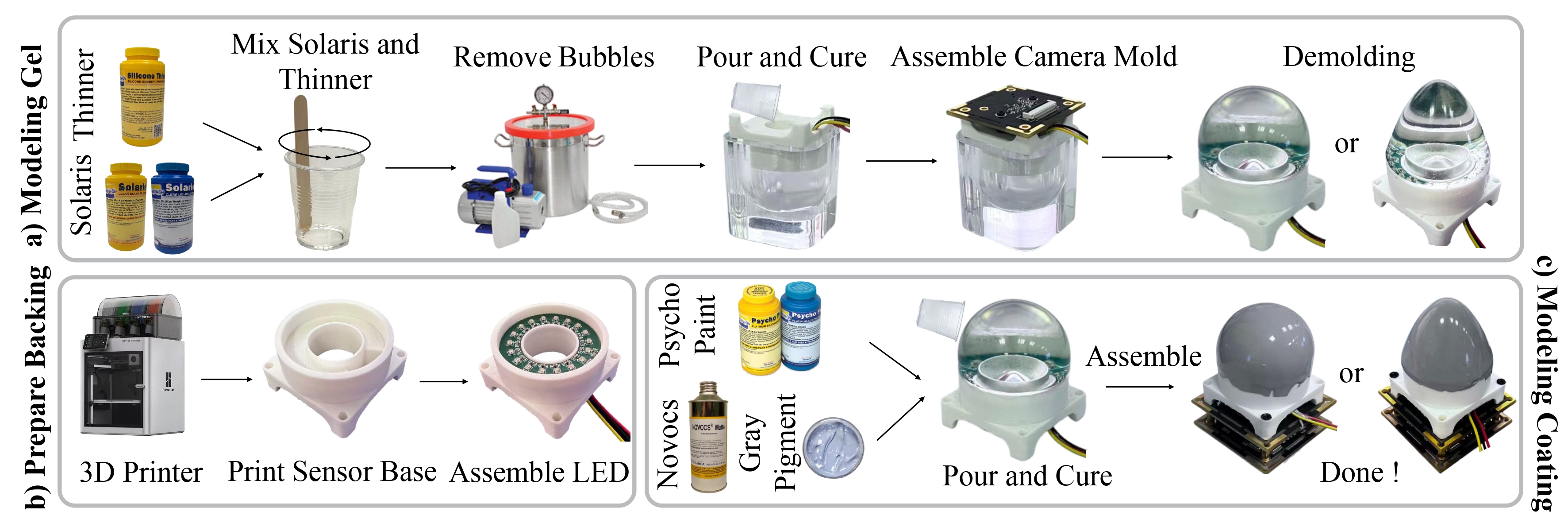

Sensor Fabrication (Video Coming Soon)